DQO: Open-source Data Quality Operations Center

DQO is a DataOps-friendly data quality monitoring tool designed to help you monitor and maintain the quality of your data. It provides a wide range of customizable data quality checks and data quality dashboards that make it easy to keep an eye on your data and identify issues as they arise.

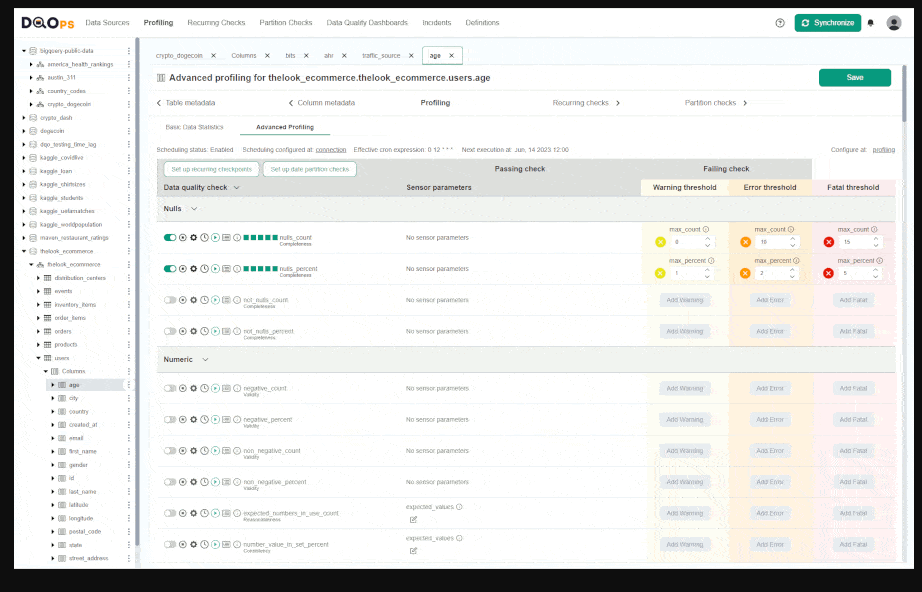

One of the key benefits of DQO is that it comes with around 100 predefined data quality checks that you can use right out of the box. These checks cover a wide range of data quality metrics, including completeness, accuracy, consistency, and timeliness. This means that you can start monitoring your data quality right away, without having to spend a lot of time configuring your monitoring setup.

In addition to its predefined checks, DQO also offers a range of customization options that allow you to tailor your data quality monitoring to your specific needs. You can easily create your own custom checks using DQO's intuitive interface, and you can also customize your data quality dashboards to display the metrics that are most important to you.

Overall, DQO is a tool for any organization that wants to ensure the quality of its data. Whether you are dealing with large volumes of data or just a few key datasets, DQO makes it easy to monitor and maintain your data quality, so you can be confident in the accuracy and reliability of your data.

Features

- Intuitive graphical interface and access via CLI

- Support for multiple data sources: BigQuery, Snowflake, PostgreSQL, Redshift, SQL Server and MySQL

- ~450 built-in table and column checks with easy customization

- Table and column-level checks that allow you to write your own SQL queries

- Daily and monthly date partition testing

- Data segmentation by up to 9 different data streams

- Built-in scheduling

- Calculation of data quality KPIs which can be displayed on multiple built-in data quality dashboards

- Incident analysis

Platforms

- Windows

- macOS

- Linux

Requirements

To use DQO you need:

- Python version 3.8 or greater (for details see Python's documentation and download sites).

- Ability to install Python packages with pip.

- JDK version 17 installed, with the JAVA_HOME environment variable set.
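The requirements above can be checked before installing. The following is a minimal sketch, not part of DQO itself: the helper names `parse_java_major` and `check_prerequisites` are hypothetical, and the script assumes that `JAVA_HOME` points at a JDK with a `bin/java` executable. It treats any Java major version other than 17 as a problem, because 17 is the only version listed above; whether other versions work is not stated here.

```python
import importlib.util
import os
import re
import subprocess
import sys
from typing import List, Optional

MIN_PYTHON = (3, 8)        # minimum Python version from the requirements list
REQUIRED_JAVA_MAJOR = 17   # JDK version from the requirements list


def parse_java_major(version_output: str) -> Optional[int]:
    """Extract the major version from `java -version` output.

    Handles both the modern scheme ("17.0.9") and the legacy one ("1.8.0_291").
    Returns None if no version string is found.
    """
    match = re.search(r'version "(\d+)(?:\.(\d+))?', version_output)
    if not match:
        return None
    major = int(match.group(1))
    if major == 1 and match.group(2):
        # Legacy scheme: Java 8 reports itself as 1.8
        return int(match.group(2))
    return major


def check_prerequisites() -> List[str]:
    """Return a list of human-readable problems; an empty list means all checks passed."""
    problems = []

    if sys.version_info < MIN_PYTHON:
        problems.append("Python 3.8 or greater is required")

    if importlib.util.find_spec("pip") is None:
        problems.append("pip is not available for this Python interpreter")

    java_home = os.environ.get("JAVA_HOME")
    if not java_home:
        problems.append("JAVA_HOME is not set")
        return problems

    # Assumption: a standard JDK layout with the launcher under bin/
    java_bin = os.path.join(java_home, "bin", "java.exe" if os.name == "nt" else "java")
    if not os.path.isfile(java_bin):
        problems.append(f"no java executable found at {java_bin}")
        return problems

    # `java -version` writes to stderr on most JDKs, so inspect both streams
    result = subprocess.run([java_bin, "-version"], capture_output=True, text=True)
    major = parse_java_major(result.stderr + result.stdout)
    if major != REQUIRED_JAVA_MAJOR:
        problems.append(f"expected JDK {REQUIRED_JAVA_MAJOR}, found {major}")

    return problems


if __name__ == "__main__":
    issues = check_prerequisites()
    for issue in issues:
        print("Problem:", issue)
    if not issues:
        print("All prerequisites look fine.")
```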

Install

To install DQO with pip, run:

Windows

py -m pip install dqops

macOS/Linux

pip3 install dqops

If you prefer to work with the source code, just clone our GitHub repository https://github.com/dqops/dqo and run

Run the dqo app to finalize the installation.

Windows

dqo

macOS/Linux

./dqo

Create the DQO userhome folder.

After installation, you will be asked whether to initialize the DQO userhome folder in the default location. Type Y to create the folder.

The userhome folder stores data locally, such as sensor readouts and check results, as well as data source configurations. You can learn more about data storage here.

Log in to DQO Cloud.

To use DQO features such as data quality dashboards or cloud storage of data quality definitions and results, you must create a DQO Cloud account.

After creating the userhome folder, you will be asked whether to log in to DQO Cloud. After typing Y, you will be redirected to https://cloud.dqo.ai/registration, where you can create a new account, use Google single sign-on (SSO), or log in if you already have an account.

During the first registration, a unique identification code (API Key) is generated and automatically retrieved by the DQO application. The API Key is then stored in the configuration file.

Open the DQO User Interface Console.

Open the console in your browser by Ctrl-clicking the link displayed on the command line (for example http://localhost:8888) or by copying the link into the address bar.
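When starting DQO from a script, it can help to wait until the user interface actually responds before opening the browser. The sketch below polls a URL using only the Python standard library. The function name `wait_for_ui` is hypothetical, and the default URL is simply the example address mentioned above; use whatever link DQO prints on your command line.

```python
import time
import urllib.error
import urllib.request


def wait_for_ui(url: str = "http://localhost:8888",
                timeout_s: float = 60.0,
                poll_s: float = 2.0) -> bool:
    """Poll `url` until it answers an HTTP request or `timeout_s` elapses.

    Returns True as soon as the server responds (even with an error status),
    and False if nothing answered before the deadline.
    """
    deadline = time.monotonic() + timeout_s
    while True:
        try:
            with urllib.request.urlopen(url, timeout=5):
                return True
        except urllib.error.HTTPError:
            # The server answered with an HTTP error code, so it is reachable
            return True
        except (urllib.error.URLError, OSError):
            # Connection refused or timed out: the server is not up yet
            pass
        if time.monotonic() >= deadline:
            return False
        time.sleep(poll_s)
```

For example, `wait_for_ui("http://localhost:8888", timeout_s=120)` returns `True` once the address responds, after which the link can be opened with `webbrowser.open(...)` from the standard library.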

License

- Apache-2.0 license

Resources