Dify - Self-Hosted Platform to Build AI-Powered Apps

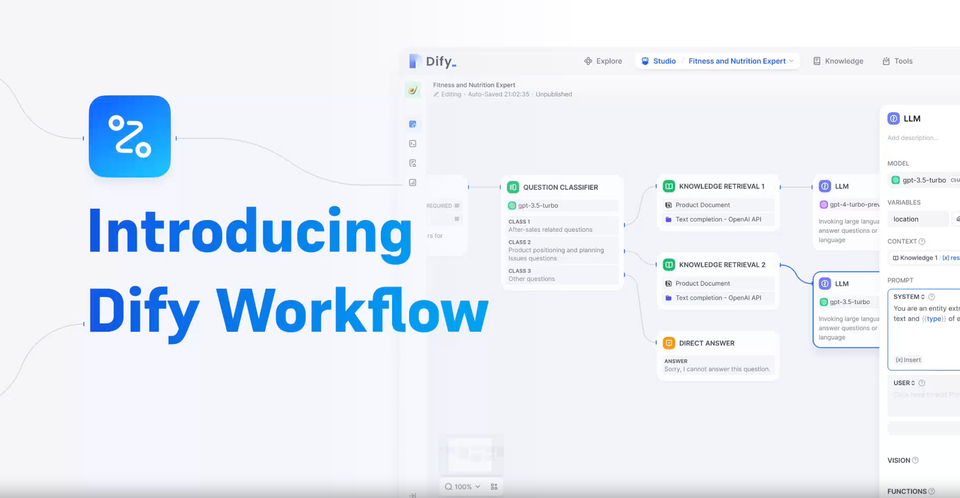

Dify is an open-source LLM app development platform. Its intuitive interface combines AI workflow, RAG pipeline, agent capabilities, model management, observability features and more, letting you quickly go from prototype to production.

It is designed to streamline the development of LLM-powered applications, with features that help developers build efficient, scalable, and maintainable AI apps.

Features

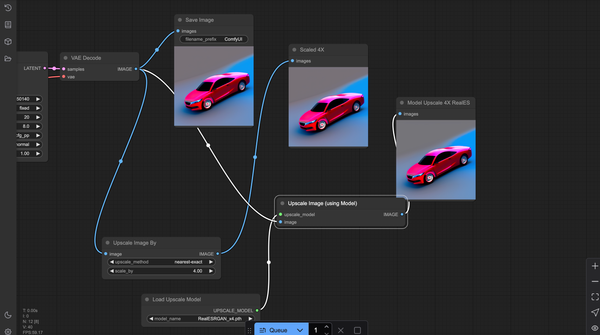

1. Workflow: Build and test powerful AI workflows on a visual canvas, leveraging all the following features and beyond.

2. Comprehensive model support: Seamless integration with hundreds of proprietary and open-source LLMs from dozens of inference providers and self-hosted solutions, covering GPT, Mistral, Llama3, and any OpenAI API-compatible models. A full list of supported model providers is available in the Dify documentation.

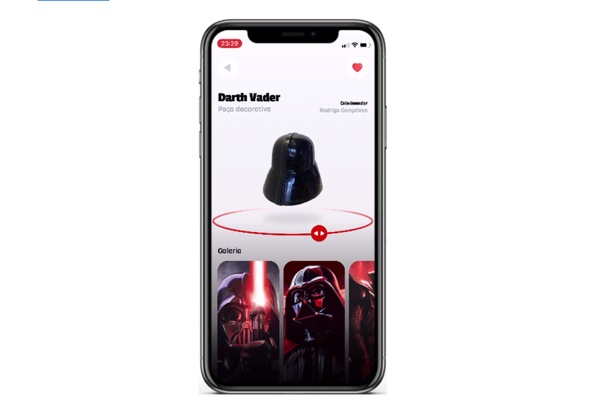

3. Prompt IDE: Intuitive interface for crafting prompts, comparing model performance, and adding features such as text-to-speech to a chat-based app.

4. RAG Pipeline: Extensive RAG capabilities that cover everything from document ingestion to retrieval, with out-of-the-box support for text extraction from PDFs, PPTs, and other common document formats.

5. Agent capabilities: You can define agents based on LLM Function Calling or ReAct, and add pre-built or custom tools for the agent. Dify provides 50+ built-in tools for AI agents, such as Google Search, DALL·E, Stable Diffusion and WolframAlpha.

6. LLMOps: Monitor and analyze application logs and performance over time. You can continuously improve prompts, datasets, and models based on production data and annotations.

7. Backend-as-a-Service: All of Dify's offerings come with corresponding APIs, so you can integrate Dify into your own business logic with little effort.
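
To make the Backend-as-a-Service point concrete, here is a minimal sketch of calling a Dify chat app from Python using only the standard library. It rests on several assumptions that are not stated in this article: the base URL `http://localhost/v1` and the key `app-your-api-key` are placeholders, and the `/chat-messages` path and the `inputs`, `query`, `response_mode`, and `user` fields reflect my understanding of Dify's chat app API. Check the API reference shown for your own app before relying on them. The helper names `build_chat_request` and `send_chat_message` are hypothetical.

```python
import json
import urllib.request

# Placeholders (assumptions): point these at your own deployment and app key.
DIFY_BASE_URL = "http://localhost/v1"
DIFY_API_KEY = "app-your-api-key"


def build_chat_request(query, user, base_url=DIFY_BASE_URL, api_key=DIFY_API_KEY):
    """Build (but do not send) a POST request for a Dify chat app.

    The endpoint path and payload fields are assumed; verify them against
    the API page of your app.
    """
    payload = {
        "inputs": {},                 # app-defined variables, empty here
        "query": query,               # the end user's message
        "response_mode": "blocking",  # wait for the full answer
        "user": user,                 # stable identifier for the end user
    }
    return urllib.request.Request(
        url=f"{base_url}/chat-messages",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send_chat_message(query, user):
    """Send the request and return the decoded JSON response as a dict."""
    req = build_chat_request(query, user)
    with urllib.request.urlopen(req, timeout=30) as resp:
        return json.loads(resp.read().decode("utf-8"))


# Example usage (requires a running Dify instance and a valid app key):
# reply = send_chat_message("What can you do?", user="demo-user")
# print(reply.get("answer"))
```

Building the request separately from sending it keeps the payload easy to inspect and test without a live server.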

License

This repository is available under the Dify Open Source License, which is essentially Apache 2.0 with a few additional restrictions.