data science

SmallPond: The Lightweight Data Processing Framework That Makes Big Data Feel Simple

smallpond is a lightweight data processing framework built on DuckDB and 3FS.

Open-source data science application

research

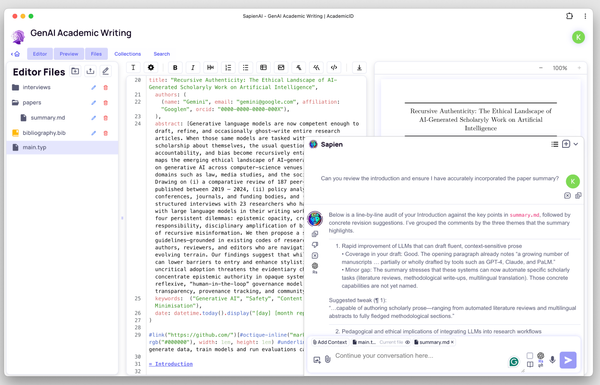

What is SapienAI? SapienAI is a self-hosted academic chatbot and research workspace. It unifies the latest models from OpenAI, Anthropic, Google, and self-hosted Ollama models into a single, secure interface. Its killer features include real-time audio chat, 100% local data storage, academic paper integration, semantic search, and dedicated research spaces.

data engineering

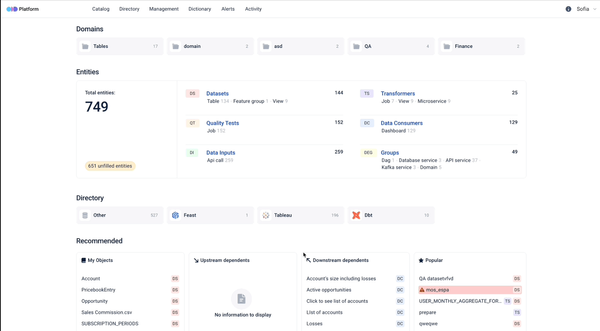

What is ODD or Open-Source Data Discovery? ODD (Open-Source Data Discovery) is a powerful, self-hosted, open-source tool designed to help data teams streamline and democratize access to data. It cuts down the time spent searching for data by providing a modern, intuitive interface that makes discovering datasets fast and easy.

data science

A powerful, open-source scientific calculator with intuitive math-like syntax — effortlessly handle user-defined variables and functions, differentiation, integration, complex numbers, and more.

data science

Numbat: The Programming Language That Catches Unit Errors Before You Do (A Friendly Developer’s Guide)

Open-source

If you’ve ever stared at a spreadsheet wondering how to turn your chaotic financial data or project metrics into something meaningful, you’re not alone. Most tools either lock you into expensive SaaS subscriptions, drown you in complexity, or lack the flexibility to handle both data visualization and real-world

List

What Is AI Data Visualization, and Why Does It Matter, Especially Now? AI-powered data visualization isn’t just a nice-to-have; it’s the secret weapon behind smarter decisions. Gone are the days of static charts and manual Excel reports. Today, we’re using artificial intelligence to turn raw numbers

List

If you're a developer, data engineer, software engineer, or part of a data analytics or data science team, chances are you’ve spent hours sifting through logs, sometimes in frustration. That’s where a JSON Log Viewer comes into play, and understanding what it is, why it matters,

log files

Log files are everywhere, from application servers and cloud platforms to databases and IoT devices. As systems scale, so do their logs. It's not uncommon to encounter multi-gigabyte JSON dumps, 35GB XML exports, or compressed log archives that traditional text editors can't even open. This

web development

TileDB is a versatile, high-performance engine designed for managing both dense and sparse multi-dimensional arrays. It serves as an efficient solution for modeling complex datasets across various domains. Built as an embeddable C++ library, TileDB operates seamlessly on Linux, macOS, and Windows platforms. TileDB is widely used

Artificial Intelligence (AI)

What is JamAI Base? JamAI Base is an innovative, open-source platform designed to simplify the development of AI-driven applications using Retrieval-Augmented Generation (RAG). At its core, RAG combines the power of retrieval-based systems with generative models, enabling more accurate and context-aware responses. It works seamlessly with many frameworks such as

database

Explore 18 open-source distributed database solutions, understand what makes them essential for developers, and discover real-world use cases. Unlock scalability, resilience, and performance for your next project!