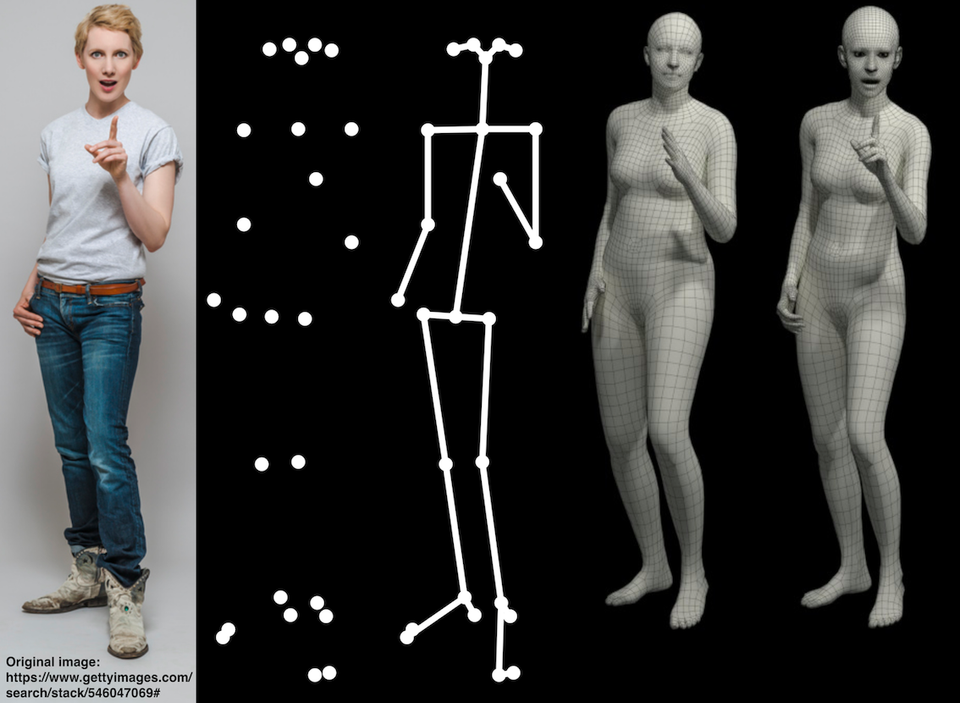

SMPLify-X: Generate an accurate 3D model from a single image

SMPLify-X is an open-source project that produces an expressive 3D body capture, with detailed hands, face, and body, from a single image. The project is the result of research by seven researchers and computer scientists at the Max Planck ETH Center for Learning Systems.

The researchers trained a model on a rich dataset to produce detailed 3D reconstructions that capture human body pose, hand gestures, and facial expression. Unlike methods that rely on multiple cameras to reconstruct a 3D model, SMPLify-X takes a single image as input.
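To make the single-image idea more concrete, here is a minimal sketch of a confidence-weighted reprojection error, the kind of quantity a fitting procedure can minimize so that a 3D skeleton agrees with 2D detections in one image. It is an illustration under stated assumptions, not the project's code: the function names, the pinhole camera model, and the plain squared error are all choices made for this example.

```python
from typing import List, Tuple

Point3D = Tuple[float, float, float]      # (x, y, z) in camera space
Keypoint2D = Tuple[float, float, float]   # (u, v, confidence) in pixels


def project(point: Point3D, focal: float, center: Tuple[float, float]) -> Tuple[float, float]:
    """Pinhole projection of a camera-space point onto the image plane."""
    x, y, z = point
    if z <= 0:
        raise ValueError("point must lie in front of the camera (z > 0)")
    return (focal * x / z + center[0], focal * y / z + center[1])


def reprojection_error(
    joints_3d: List[Point3D],
    keypoints_2d: List[Keypoint2D],
    focal: float,
    center: Tuple[float, float],
) -> float:
    """Sum of squared pixel distances, each weighted by detection confidence.

    Hypothetical helper for illustration; a real system would typically
    use a robust penalty instead of a plain squared error.
    """
    if len(joints_3d) != len(keypoints_2d):
        raise ValueError("need one 2D keypoint per 3D joint")
    total = 0.0
    for joint, (u, v, conf) in zip(joints_3d, keypoints_2d):
        pu, pv = project(joint, focal, center)
        total += conf * ((pu - u) ** 2 + (pv - v) ** 2)
    return total
```

A fitting loop would adjust the model's pose and shape parameters to lower this value. Low-confidence detections contribute less, so an occluded joint does not dominate the fit.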

The team uses a large dataset of 3D scans to produce an expressive, realistic model. They also created a body pose prior based on a large dataset of thousands of body poses.

The project uses PyTorch, an open-source machine learning framework, and PyRender, a lightweight open-source Python library for 3D rendering and visualization released under the MIT license.

The project also uses VPoser, a variational human body pose prior by the same researchers. It is likewise released free of charge for non-commercial use.
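As a rough illustration of how a variational prior can act as a regularizer, the sketch below scores a latent pose code by its negative log-density under a standard normal distribution (dropping the constant term), so codes far from the origin are penalized as less plausible. This is a generic sketch, not VPoser's API: the function name, the `weight` parameter, and the assumption of a standard normal latent space are illustrative.

```python
from typing import Sequence


def latent_pose_prior(latent_code: Sequence[float], weight: float = 1.0) -> float:
    """Penalty for a latent pose code under a standard normal prior.

    Equals weight * 0.5 * ||z||^2, i.e. the negative log-density of
    N(0, I) up to an additive constant. Hypothetical helper for
    illustration only.
    """
    return weight * 0.5 * sum(z * z for z in latent_code)
```

In an optimization-based fit, a term like this would be added to the image-based error so that the solver prefers poses the prior considers likely.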

Features

- 2D body pose detector with support for body keypoints, hand keypoints, and facial landmarks.

- Detailed collision-based model for meshes.

- Gender classification.

- EHF (Expressive Hands and Faces) dataset for evaluation.

- PyTorch implementation achieves a speedup of more than 8x over Chumpy.
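The body, hand, and face keypoints mentioned in the first feature are commonly stored by 2D pose detectors as flat lists of `x, y, confidence` values. The sketch below shows one way to regroup such lists into triplets and drop low-confidence detections. The dictionary keys (`body`, `left_hand`, `right_hand`, `face`) and the function names are hypothetical and chosen for this example; the actual input format depends on the detector used.

```python
from typing import Dict, List, Tuple

Keypoint = Tuple[float, float, float]  # (x, y, confidence)

# Hypothetical group names, not taken from any specific detector.
GROUPS = ("body", "left_hand", "right_hand", "face")


def to_triplets(flat: List[float]) -> List[Keypoint]:
    """Regroup a flat [x0, y0, c0, x1, y1, c1, ...] list into triplets."""
    if len(flat) % 3 != 0:
        raise ValueError("flat keypoint list length must be a multiple of 3")
    return [(flat[i], flat[i + 1], flat[i + 2]) for i in range(0, len(flat), 3)]


def gather_keypoints(
    detection: Dict[str, List[float]], min_confidence: float = 0.0
) -> Dict[str, List[Keypoint]]:
    """Return keypoints per group, keeping those at or above min_confidence.

    Groups missing from the detection are returned as empty lists.
    """
    result: Dict[str, List[Keypoint]] = {}
    for group in GROUPS:
        triplets = to_triplets(detection.get(group, []))
        result[group] = [kp for kp in triplets if kp[2] >= min_confidence]
    return result
```

For example, `gather_keypoints(detection, min_confidence=0.5)` keeps only detections the 2D detector was reasonably sure about, which is a common preprocessing step before fitting a 3D model.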

License

The source code is released free of charge for non-commercial scientific research purposes. Contact [email protected] for commercial licensing and any related questions about business applications.

Project Disclaimer

The original images used for Figures 1 and 2 of the paper can be found at this link. The images in the paper are used under license from gettyimages.com. We have acquired the right to use them in the publication, but redistribution is not allowed. Please follow the instructions at the given link to acquire the right of usage. Our results were obtained at the original images' resolution of 483 × 724 pixels.